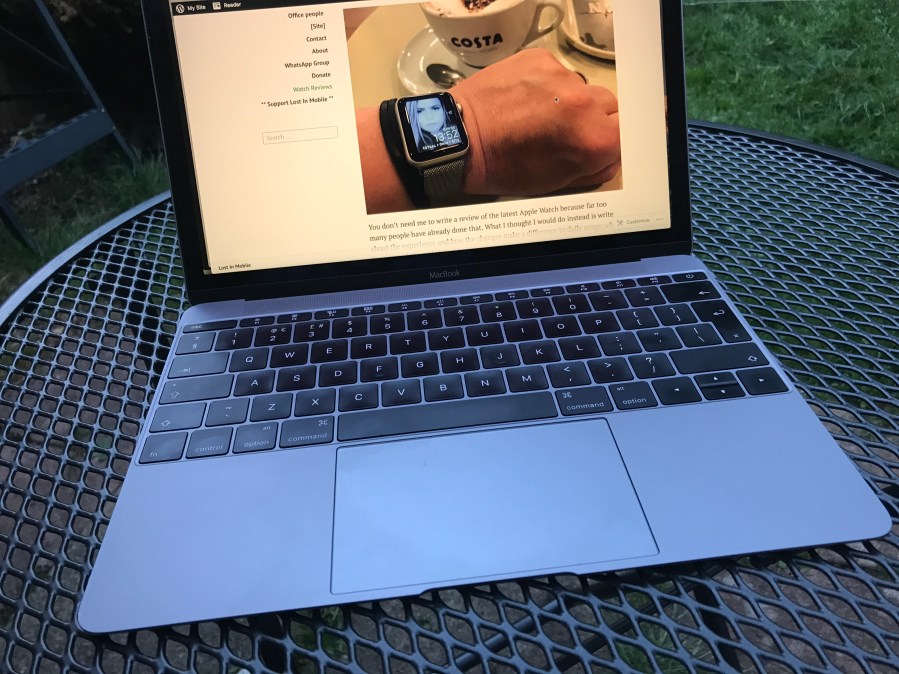

People jokingly call the MacBook the ‘adorable’ because of how it looks, and I get that. It is without doubt the best-looking MacBook available today and arguably the best-looking Apple device of all.

In the few days that I have owned mine, the iMac has sat alone in the corner waiting to be turned on so that it can make that clicking noise of death that means it is about to explode. In those few days with the MacBook, I have realised that I can save it the pain and will almost certainly be using the MacBook for the foreseeable future.

I do have some concerns over the longevity of the specs and the fact that it is technically not a computer designed for many years of use, where the hard drive gets filled up and it becomes a home for a person’s digital life over half a decade. It is designed to be used anywhere and to let me do what I need to, and in that regard it is doing a sterling job.

The keyboard in particular is a revelation. I have been using Apple keyboards for years now and I find myself preferring this one to the iMac offering. I have always liked shallow keyboards and find the fascination some have with high-travel clicky keyboards quite bizarre, and I am finding myself to be even less prone to mistakes on the MacBook keyboard than I have been on others.

Battery performance could be better, but at the moment a charge every 3 days is OK. It is supposed to manage 1,000 cycles and this second-hand model has only done 78 so far which should mean another 2,766 days of use in terms of the battery. The 256GB of space could be a bigger barrier, but I’m starting to wonder if that will be the case.
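For anyone who wants to check that battery figure, here is a quick sketch of the sum in Python. It assumes every charge counts as one full cycle and that the one-charge-every-3-days habit holds, both of which are simplifications; the function name is just made up for illustration.

```python
def remaining_battery_days(rated_cycles, used_cycles, days_per_charge):
    """Rough days of use left, assuming one full cycle per charge (a simplification)."""
    return (rated_cycles - used_cycles) * days_per_charge


# Figures from the paragraph above: 1,000 rated cycles, 78 used, a charge every 3 days.
days = remaining_battery_days(1000, 78, 3)
print(days)                      # 2766
print(round(days / 365.25, 1))   # 7.6 (years, roughly)
```

In practice partial charges count as fractions of a cycle, so treat this as a back-of-the-envelope estimate only.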

I am writing a big freelance article at the moment and the entire thing, all screenshots etc, is being saved in Dropbox as I go which means less space used on the MacBook. I will also get into a regime of clearing out stuff once a month and with a bit of luck this little computer could be all I need for what I do.

Maybe we are finally reaching that point where the computer can be almost dumb, with the content in the cloud, and not feel clunky at all. Then again, the MacBook is far from dumb.


Yeah, to me there’s a wide, if sometimes fuzzy, line between “I have an extra copy of everything on the cloud” and “this hardware ain’t much good unless you’re on wifi right now”. (And assuming your service provider keeps supporting it, especially if you’re paying “rent” to one.)


Sorry if I’m being picky, but computers, at least so far, are by definition dumb. They can only count to 1. They can make a one a zero and vice versa. And they “know” that 0+0=0, 0+1=1, 1+0=1, and 1+1=0 carry a 1 to the next bit. But they can do all that very very fast in devices that are very very small.
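To make that one-bit arithmetic concrete, here is a minimal sketch in Python of the rules listed above, chained together to add whole numbers. It is only an illustration: the names `half_adder` and `add_bits` and the 8-bit width are assumptions of this example, not a description of how any particular chip is built.

```python
def half_adder(a, b):
    """Add two single bits; returns (sum_bit, carry_bit)."""
    return a ^ b, a & b  # 1+1 gives sum 0, carry 1


def add_bits(x, y, width=8):
    """Add two non-negative integers one bit at a time (ripple carry).

    Any carry out of the top bit is dropped, so the result wraps at 2**width.
    """
    result = 0
    carry = 0
    for i in range(width):
        a = (x >> i) & 1
        b = (y >> i) & 1
        s1, c1 = half_adder(a, b)        # add the two input bits
        s2, c2 = half_adder(s1, carry)   # then add the incoming carry
        result |= s2 << i
        carry = c1 | c2                  # carry into the next bit
    return result


print(half_adder(1, 1))  # (0, 1)
print(add_bits(5, 3))    # 8
```

Everything here is built from XOR, AND and OR on single bits, which is the "count to 1, very very fast" idea in miniature.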

Also consider how far computers have come in terms of size and speed. Your MacBook would have been a supercomputer 15 years ago.


@Bob do you have an opinion on Searle’s “Chinese Room” experiment? (Just finished rereading Dennett’s “Consciousness Explained”)


Also, I think a MacBook is not quite a 2002-era supercomputer. 1992… maybe? 1982 definitely.


This article gives an estimate of 102 Gflops for a 2008 MacBook Pro.

https://www.macobserver.com/tmo/article/The_Fastest_Mac_Compared_to_Todays_Supercomputers

Doing some very rough checking, an early 2016 MacBook is about double the 2008 MacBook Pro based on this https://browser.primatelabs.com/mac-benchmarks.

I know it doesn’t necessarily translate exactly, but if the MacBook is about 200 Gflops, then it fits into the top 500 supercomputers of 2002 at, say, around position 400, based on this https://www.top500.org/list/2002/11/?page=4


You make a better case than I woulda guessed, but like you partially acknowledge, there’s more to “supercomputers” than FLOP-ish counts; input/output throughput especially.

I guess I’m struck that I don’t think of my gear now as doing much that my 2002 stuff couldn’t.

The most fun thing to chart the change of is games – it’s amazing to see my iPad Mini run GTA3… but the visual gap between GTA3 (2001) and IV (2008) is the big one. IV looks essentially modern, and you don’t get the idea that the developers are fundamentally constrained, but with the “3” era, it’s all too obvious how the sausage is being made.


Certainly there’s more to it than Gflops. Some of the articles talked about graphics performance. You’re right in that your old gear could do much the same as your current gear, just a lot slower. And of course, we didn’t want to wait. And then there’s all the other stuff: input, output, memory, graphics, etc.


I had to refresh my memory on the Chinese Room experiment. It attempts to differentiate between genuinely understanding and merely being pre-programmed.

It depends on what you consider the human brain to be. Without getting into the areas of the soul or consciousness, I see the brain as an immensely complex biochemical computer. On the other side, some of the AI learning programs are bordering on “understanding”. For example, an AI can learn about round objects by example, and then extend that example to objects it has never seen (or sensed). Does the AI “understand” what a round object is, or is it going by example and comparison? I could ask the AI what round is and I might get a series of examples. But if the programming is there, I could ask it for the defining qualities of a round object. In other words, how it has learned what round is.

Now think about what you would answer to the same question. And think about how a child learns what round is. I venture to say that they are very similar. And a child learns that the concept of round versus straight uses specific language. Because if the child does not use that specific language, he or she can’t communicate that concept to others.

That’s fine for concrete things, but how does a child learn less concrete concepts? Actually, in many, if not most, cases through a similar process. That process is learning by being “programmed” or by trial and error.

I believe that AI has the potential to become what we would call intelligent along with the understanding and will even be taught what is right and wrong, good and evil. Whether it/he/she will have a soul, I leave to the philosophers and theologians.


I think we’re not too far apart on this stuff. I was struck by the stridency of “computers, at least so far, are by definition dumb”, though since you mitigate it with “so far”, I can’t make my “hot take” too hot 😀

There’s a complaint by people that any time a problem in AI is solved, it’s slated as “no longer AI”. And there’s some truth to that, but also legitimacy that it’s “not AI” in the sense that people think of.

Go is a very, very hard problem for computers and people alike, but when they write a solver for it… that solver can’t readily be taught checkers, say, without more programming. There’s a kind of “meta-intelligence” that still seems to be missing, even with something like Watson playing such a human game as Jeopardy. These computers are smart, but they’re talented in the way a dolphin is a good swimmer or the spider a good spinner of webs vs a human who swims or knits; in this (strained) metaphor evolution is the programmer.

Anyway, one of the takeaways from my reading and self-reflection is that the Zen crowd is right; there’s less “there” there to ourselves and our consciousness than we give ourselves credit for… it can be a fun topic.


Oh, and fwiw, the “Chinese Room” is kind of a piece of junk. The “Systems Reply” pretty neatly refutes it: if the room is really able to hold its own in a full conversation with a speaker of Chinese, then we can say the entire system of room plus operator speaks Chinese, even if we can’t pinpoint a single subpart of the system that “understands”. (The cheat is that that room would be of such a marvelous complexity that it’s completely ridiculous to think it can be operated by one human following instructions on little scraps of paper.)


Much of this is a matter of degree. How different is it, conceptually, to understand what a round object is versus to understand a language? We talk about all the subtleties of language, but aren’t they learned? I believe that we have the technology; now it’s a matter of scale.

AI used to be thought of as totally programmed. In other words, you’d create an intelligent system from scratch. Now we’re creating an intelligent system in the same way as humans become intelligent.


And this just came up in my “this may interest you” list.

Sounds vaguely human to me. An AI-trained computer can have multiple areas of expertise. The sum is greater than the parts.

I always thought that wisdom was knowledge plus experience. I wonder at what point we’ll think that AI truly has wisdom.

You used an example of Go and then checkers, or any two disparate skills. A human would have to learn them as well, and knowing one doesn’t necessarily imply knowledge of the other. But it did get me to thinking about decisions and what we call free will. A human can decide that he doesn’t like something and stop doing it. Or a human can see something and decide that he/she wants to know more. I’ve seen arguments that what we call free will is a product of our knowledge, experience, environment, and biochemistry. That we really don’t have a choice, it just feels that way. I wonder whether, as AI becomes more and more complex, we won’t start seeing what, in a human, we would call free will.


It’s frustrating that it’s hard to get a feel for the new stuff as being that much faster, really.

The most tangible evidence seems to be how much better fonts are rendered these days – I think it goes beyond resolution and into having the processor cycles to spare, but when you see a late-90s webpage screenshot, it’s striking how much the typography looks more like an 80s Mac than a modern retina screen.

But when I think of the photo processing I slog my way through, or the little toy games I used to write, the last 20 years don’t seem to have improved things that much. (Though it’s hilarious to see how fast even cheap modern hardware can emulate a mid-80s or even very early-90s home computer.)


Actually, you mentioned graphics capabilities and that’s one area where there’s been a huge improvement. Especially for things like games, and any graphics processing, the more the computer can push onto the graphics processor, the better. I have photo processing apps that can make changes to photos in real time on my late-2014 Retina iMac. Those same apps would crawl on my 2009 MacBook Pro.


Fair enough. Making an operation like that dynamic and responsive vs churny is actually an interesting qualitative improvement. I guess I’m just bitter because when I set up ImageMagick to do some simple batch resizing it still seems to churn on my lil old Macbook Air 2013 😀

Getting back to graphics and games: I went to college in ’92 having begged my mom for a PC. “For college”, but mostly to play Wing Commander. (BRILLIANT game.) By ’94 or so I was compelled to upgrade to a 486, to play X-Wing and Wing Commander III, not to mention DOOM. And then in ’95 we got the dorms wired for Internet, and these punk-ass freshmen with their shiny Pentiums would play network “Duke Nukem 3D” against me. My trusty 486 would hold its own, roughly, until it got to the underwater level and some audio or visual effect processing that would make my display go from Frames Per Second to Seconds Per Frame.

What I’m saying is I switched to consoles for gaming shortly thereafter to get off that upgrade treadmill, and enjoyed the wonderland of N64 couch gaming etc. Sometimes I realize I miss out on some interesting things like hacking GTA Vice City, but I’ve always preferred those controls to WASD/mice anyway…


Ah, nostalgia. I’m 25 years before you. Maybe that’s why I have a somewhat different perspective. My first computer game was playing tic tac toe using a teletype machine. Actually, come to think of it, it was a learning program. You had a chance to beat it the first game, but after that, it was either a draw or, if you made a mistake, it won.

I don’t think there were any personal computers before the 70s when I was in university. In fact, I still remember when the electronic calculator finally got under $100. A lot of money in those days.
